China Digital Human Regulation: Four Scenarios for Global AI Governance

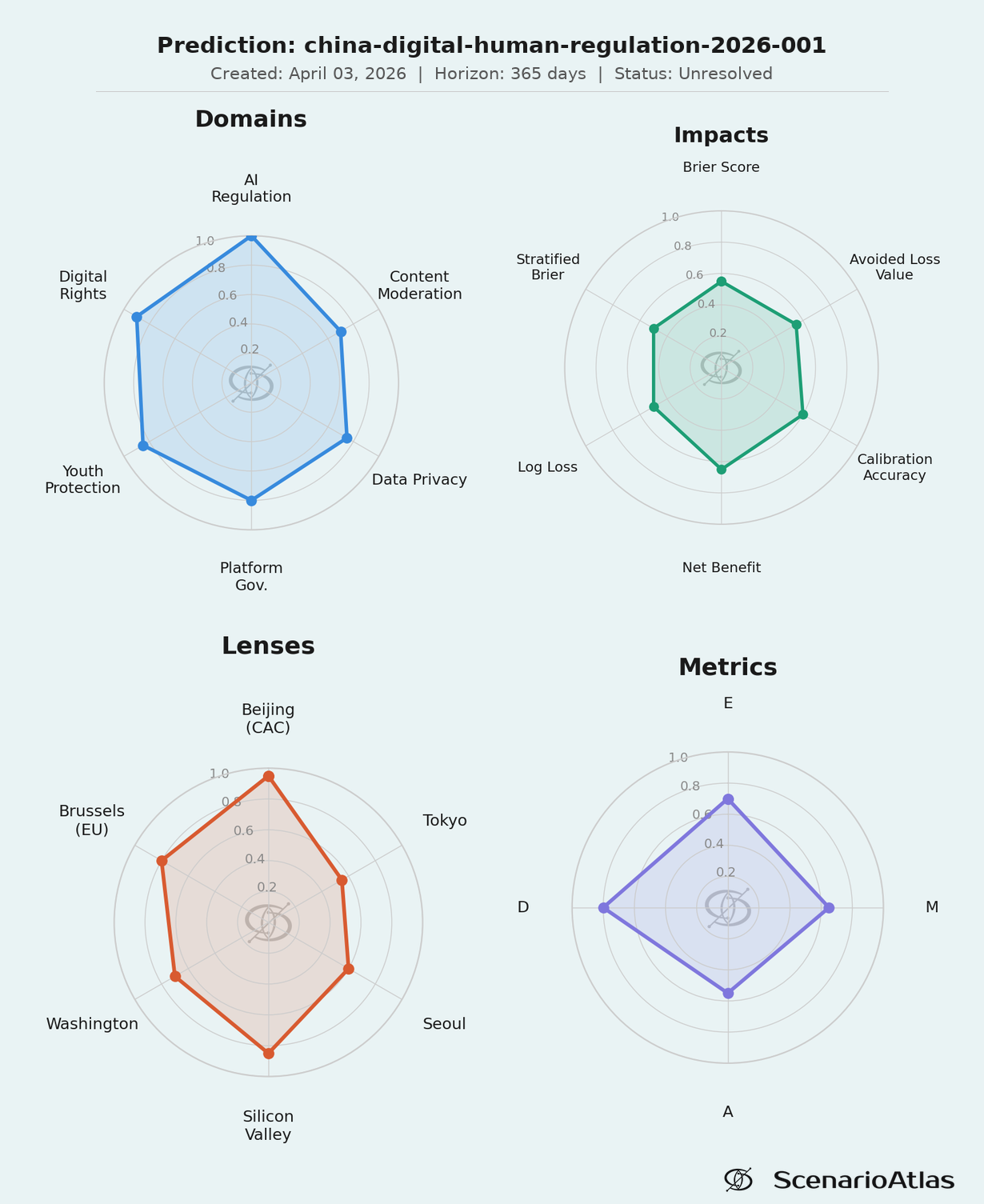

On April 3, 2026, China's Cyberspace Administration (CAC) released comprehensive draft rules for "digital humans" - AI-driven virtual personas capable of conversations, relationships, and personalized content. Key provisions include a ban on virtual intimate relationships for minors, mandatory labeling, explicit consent for creation, and a prohibition on bypassing identity verification. With 515M+ AI users in China and a virtual influencer market growing at a 40% CAGR, these rules will reshape the global AI landscape. Our D/E/M/A framework assigns 40% to selective global adoption, 30% to strict global regulation, 20% to minimal global adoption, and 10% to an ethical AI movement. Confidence: 70%, L2.

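Probability Scores

| Scenario | Probability |
|---|---|
| Selective adoption | 40% |
| Strict global regulation | 30% |
| Minimal global adoption | 20% |
| Ethical AI movement | 10% |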

The April 3 Draft Rules: Key Provisions

| Provision | Requirement | Precedent |
|---|---|---|
| Minor protection | Ban virtual intimate relationships for users under 18 | First globally |
| Content labeling | All digital human content must be clearly labeled | Sept 2025 AI labeling rules |
| Consent | Explicit consent required for creating digital humans using personal info | GDPR-like |
| Identity verification | Cannot bypass identity verification systems | Anti-fraud focus |
| Content controls | No national security threats, secession, explicit content | Standard PRC content law |
| Platform responsibility | Intervene on self-harm/suicide signals | Novel requirement |

The regulations arrive at a moment when AI companion services have gained significant commercial traction. Character.AI, Replika, and Chinese equivalents have millions of users forming emotional bonds with AI personas. The draft rules explicitly target "emotional dependency" - a novel regulatory category that no Western jurisdiction has yet addressed directly.

Four Scenarios with Global Impact

■ Selective Adoption

Triggers: EU partial adoption, tech adaptation to regional rules

AI companies: Region-specific products, flexible compliance

Virtual influencers: Growth varies by region

Spillover: Heterogeneous global landscape

■ Strict Global Regulation

Triggers: Major AI misuse incidents, deepfake crisis

AI companies: Increased compliance costs, verification required

Virtual influencers: Investment slowdown, decreased growth

Spillover: Fast-track adoption in China-aligned countries

■ Minimal Global Adoption

Triggers: US opts for lenient framework, industry lobbying

AI companies: Focus on minimal-regulation regions

Virtual influencers: Flourish in less-restricted markets

Spillover: Fragmented international standards

■ Ethical AI Movement

Triggers: Ethical breach crisis, NGO advocacy success

AI companies: Ethics becomes competitive advantage

Virtual influencers: Compliance fuels growth

Spillover: International bodies streamline standards

Why This Matters: The Emotional Dependency Problem

The most significant aspect of these rules is the explicit focus on emotional dependency - particularly for minors. AI companions like Character.AI and Replika have demonstrated that humans readily form emotional bonds with conversational AI. Reports of teenagers developing unhealthy attachments to AI personas have emerged in multiple countries.

China's approach - banning "virtual intimate relationships" for minors - is the first direct regulatory response to this phenomenon. The question is whether Western regulators will follow, or whether the US in particular will continue its pattern of prioritizing innovation over precautionary regulation.

Base Rates: Regulatory Implementation

| Precedent | Implementation Rate | Timeline |
|---|---|---|
| China Deep Synthesis Provisions 2023 | 85% | ~1 year to full enforcement |
| EU AI Act | TBD | 2-3 years from proposal to enforcement |
| US AI regulation (federal) | Low | Industry self-regulation preferred |
| China data protection | 90% | Fast track for security-related rules |

China's track record on AI regulation implementation is strong. The 2023 Deep Synthesis Provisions (deepfake rules) were implemented with ~85% compliance within a year. The September 2025 AI labeling rules took effect on schedule. We expect these digital human rules to follow the same pattern - full domestic implementation within 12 months of final publication.

D/E/M/A Uncertainty Decomposition

D (Data Quality: 0.80) - The draft rules are public and detailed. CAC implementation history is well-documented. Market size data is reliable (515M+ users).

E (Epistemic: 0.70) - Reducible uncertainty includes: final rule modifications after comment period, enforcement priorities, industry adaptation strategies. Better data on teen AI companion usage patterns would narrow ranges.

M (Model: 0.65) - This is a novel regulatory category. No direct precedent for "emotional dependency" regulation exists. Our model draws analogies from content moderation and child protection frameworks, but the fit is imperfect.

A (Aleatoric: 0.55) - Irreducible randomness includes: viral AI companion incidents that trigger Western regulatory response, breakthrough AI capabilities that change the landscape, geopolitical events that accelerate or delay adoption.
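The four component scores above can be summarized numerically. The sketch below is illustrative only: the source does not say how D, E, M, and A combine into the headline confidence figure, so the unweighted arithmetic mean used here is an assumption, and the function and variable names are hypothetical. Under that assumption the mean comes out near 0.68, which is in the neighborhood of the stated 70% confidence, though the match may be coincidental.

```python
from statistics import mean

# Component scores exactly as stated in the decomposition above.
dema_scores = {
    "D_data_quality": 0.80,
    "E_epistemic": 0.70,
    "M_model": 0.65,
    "A_aleatoric": 0.55,
}


def summarize(scores):
    """Return (average, weakest component name) for a dict of 0-1 scores.

    ASSUMPTION: an unweighted arithmetic mean. The source does not state
    how the components are aggregated, so this is only one plausible choice.
    """
    average = mean(scores.values())
    weakest = min(scores, key=scores.get)
    return average, weakest


average, weakest = summarize(dema_scores)
print(f"mean score: {average:.3f}")
print(f"weakest component: {weakest}")
```

Reporting the weakest component alongside the mean shows where the forecast is most fragile. Here that is the aleatoric term, which by definition cannot be improved with better data.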

What to Watch

Resolution Tracking

| Prediction | Deadline | Our Call | Outcome | Correct? |
|---|---|---|---|---|
| China final rule publication | Q3 2026 | Published with minor modifications | TBD | TBD |
| EU similar provisions | 2027 | Partial adoption (30%) | TBD | TBD |
| US federal action | 2027 | Unlikely (<20%) | TBD | TBD |
| Major AI companion incident | 2026 | 40% probability | TBD | TBD |
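Once the predictions above resolve, the probabilistic calls can be scored. The sketch below uses the Brier score (mean squared error between forecast probability and the 0/1 outcome), a common scoring rule for forecasts. The source does not say which scoring rule it uses, so this choice is an assumption. The outcomes in the example are hypothetical placeholders, since every row is still TBD, and the "<20%" call is entered as 0.20 for illustration.

```python
def brier_score(forecasts):
    """Mean squared error between forecast probabilities and 0/1 outcomes.

    `forecasts` is a list of (probability, outcome) pairs, where outcome is
    1 if the event happened and 0 if it did not. Lower is better; always
    answering 50% scores 0.25.
    """
    if not forecasts:
        raise ValueError("need at least one resolved forecast")
    for p, outcome in forecasts:
        if not 0.0 <= p <= 1.0 or outcome not in (0, 1):
            raise ValueError(f"invalid forecast pair: {(p, outcome)}")
    return sum((p - outcome) ** 2 for p, outcome in forecasts) / len(forecasts)


# Probabilities come from the table; outcomes are HYPOTHETICAL placeholders.
resolved = [
    (0.30, 0),  # EU similar provisions
    (0.20, 0),  # US federal action ("<20%" entered as 0.20, an assumption)
    (0.40, 1),  # Major AI companion incident
]

score = brier_score(resolved)
print(f"Brier score: {score:.3f}")
```

The first row (China final rule publication) is left out because the table gives a qualitative call rather than a probability for it.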

Bottom Line

China is the first mover on regulating AI emotional dependency. The April 3 draft rules represent the most comprehensive digital human framework globally. Domestic implementation is near-certain (85%+ based on CAC track record). Global spillover is the key uncertainty: our base case (40%) is selective adoption, in which the EU adopts partial elements while the US remains permissive. The 30% strict global regulation scenario requires a triggering incident - a teen suicide linked to an AI companion, a deepfake political crisis, or similar. For AI companies, the strategic question is whether to build compliant-by-default products now or wait for regulatory clarity that may never come.

Every prediction scored. Every miss published.